.svg)

Product

Solutions

Featured:

Read how a Cincinnati hospital system slashed contract review time by 75% with LegalOn.

Resources

Company

With every major model release, the same question lands in our inbox: Is this one better for legal?

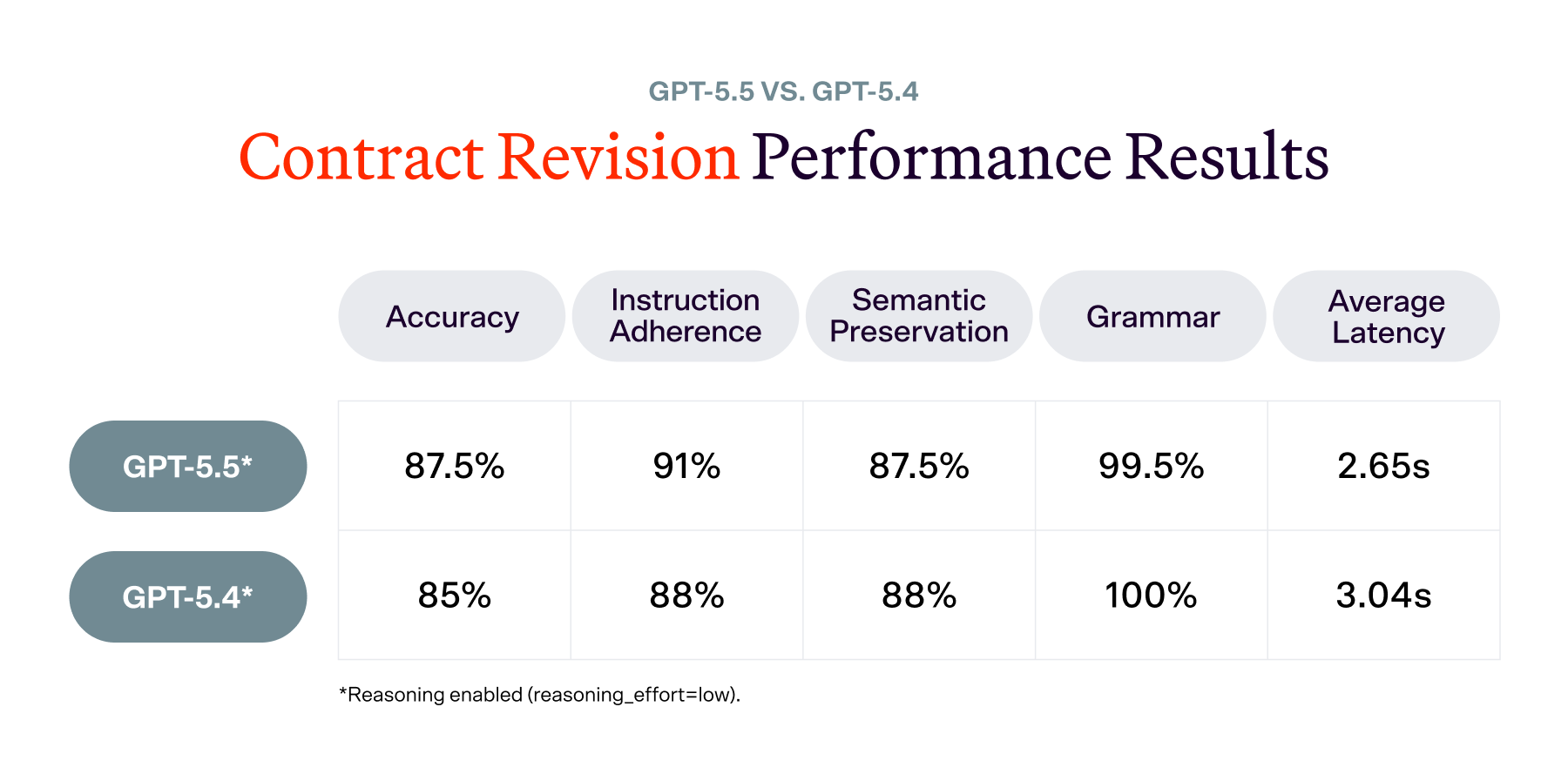

For GPT-5.5, the answer depends entirely on what you are asking it to do. We ran it against our contract revision and review benchmarks, and the results split in a way that is worth understanding before your team makes any deployment decisions.

Our AI Revise benchmark covers 200 English contract revision examples spanning NDAs, MSAs, and other commercial agreements.

Each revision is independently scored on three criteria:

A revision passes only if all three criteria are met.

The improvement shows up most clearly in how each model handles common legal revision tasks. On term revisions, converting indefinite obligations into bounded durations, GPT-5.5 more reliably uses standard contract phrasing.

A representative example: when revising "shall continue indefinitely" to a fixed term, GPT-5.4 produced "until [X] years from the Effective Date" while GPT-5.5 produced "for [X] years from the Effective Date." That distinction matters. "For" correctly pairs with a duration and follows standard legal drafting conventions, while "until" typically refers to a point in time and reads less naturally.

TERM REVISION EXAMPLE

Original shall continue indefinitely

GPT-5.4 until [X] years from the Effective Date

GPT-5.5 for [X] years from the Effective Date

On liability edits, GPT-5.5 more often makes the requested change cleanly without introducing formatting noise or unnecessary rewrites, which makes the output easier for legal teams to accept with minimal cleanup.

Where GPT-5.4 occasionally produces the better revision (roughly 1 in 16 examples), it tends to show slightly better contextual awareness.

On a confidentiality clause revision, for instance, GPT-5.4 integrated the fix within the existing sentence structure while maintaining focus on the section's specific obligations. GPT-5.5, by contrast, replaced the sentence entirely with generic agreement-term language, losing the contextual connection. That tradeoff — cleaner instruction-following at the occasional cost of broader document awareness, is where GPT-5.5 still has room to grow.

In short: GPT-5.5 is a meaningful upgrade for English contract revision. The improvement is driven primarily by stronger instruction adherence, while semantic preservation and grammar remain broadly stable. Both models were tested under identical low-reasoning settings with no custom prompts.

Our contract review benchmark covers 494 guideline-contract decisions across 26 precision-critical guidelines, spanning BAAs, MSAs, NDAs, clinical trial agreements, and commercial leases.

The task is binary: for each guideline-contract pair, the model determines whether the contract meets or fails the requirement.

The accuracy drop is driven primarily by an increase in false positives, cases where GPT-5.5 flags a compliant contract as non-compliant. Of 494 predictions, GPT-5.5 introduced 39 regressions against 24 improvements, for a net of 15 additional errors.

Here is where those errors are concentrated:

BAA: "Business Associate workforce" language (4 regressions, all false positives)

"The contract must include the following, or substantially similar, language: 'Business Associate, along with its Workforce members...'"

GPT-5.5 becomes overly strict on "substantially similar" matching, flagging contracts that GPT-5.4 correctly recognized as compliant. This is the clearest example of the false positive pattern driving the overall regression.

NDA: No obligation to enter further agreements (3 regressions, all false negatives)

"Make sure there is language clarifying that the Agreement doesn't obligate the parties to enter into further agreements."

GPT-5.5 incorrectly marks these contracts as meeting the requirement when the clarifying language is actually absent. This is an absence-detection failure: the model reads past a gap that GPT-5.4 caught.

BAA: Defined capitalized terms (3 improvements)

"All capitalized terms that are not proper nouns are defined in the agreement."

GPT-5.5 correctly identifies missing term definitions that GPT-5.4 overlooked, catching UNMET contracts that GPT-5.4 incorrectly passed.

NDA: Bilateral structure or disclosing party identified (3 improvements)

"The NDA must be bilateral or the disclosing party must be identified."

GPT-5.5 better detects whether the NDA structure is bilateral, both catching missing bilateral language and correctly recognizing it when present. This is one area where its stronger clause-detection ability comes through.

The improvement pattern shows GPT-5.5 is better at detecting certain absent clauses, but the gains are not enough to offset the increased false alarm rate, particularly in MSA and BAA contract types.

The pattern of newer OpenAI models not consistently improving on structured legal compliance tasks continues with GPT-5.5. GPT-5.5 sits between GPT-5.4 and GPT-5.2 in accuracy on this benchmark, above the earlier baseline but below the current best.

In short: GPT-5.5 is not an upgrade for contract review under naive conditions. It regresses 3pp from GPT-5.4, driven by increased false positives in BAA and MSA contract types. Enabling reasoning does not recover the gap and is not a useful trade-off in production. Both models were tested under identical conditions with no custom prompts or fine-tuning.

We will compare GPT-5.5 against our full model leaderboard, including higher-reasoning configurations and the strongest current non-OpenAI models.

We will also publish more about how each benchmark is built, and why we believe task-specific evaluation is the only honest way to measure AI for legal work.

Follow LegalOn on LinkedIn for updates.

*Revision results reflect the AI Revise benchmark (n=200, low-reasoning settings, no custom prompts). Review results reflect a 26-guideline precision-critical subset (n=494 guideline-contract pairs, naive one-shot prompting). Both benchmarks use human-annotated ground truth.

Credits: Gabor Melli, Deddy Jobson, Sonny Chee, and Petrie Wong